Teutonic Subnet on Bittensor begins decentralized training of an 80B AI model, marking the largest public AI training effort on-chain.

Author: Akshat Thakur

May 11, 2026: The Teutonic Subnet on Bittensor has officially started training an 80-billion-parameter AI model on decentralized infrastructure, a move community members are calling the largest decentralized AI training effort ever attempted.

Community replies to @const_reborn's post (https://t.co/CGODfuW8tc):

Vex (@vex020900), 12:51 PM, May 11, 2026: “The loss is currently around 3, and I believe we can bring it close to 0.1 soon.” https://t.co/ijkw0K0dRe

leet (@0xleet), 11:51 AM, May 11, 2026: “Does this mean SN3 is now Teutonic? am I understanding this correctly”

Joe Edwards (@JMEimagine), 11:36 AM, May 11, 2026: “It’s great… but Sam can still pull more out right??? Until he’s fully removed, could we not copy and paste all this into a new slot? Open 129 for it or something ?🤷♂️”

Teutonic is a subnet operating on Bittensor. Bittensor is a decentralized machine intelligence network where miners and validators collaborate to build AI systems.

Each subnet focuses on a different AI workload. Some handle text generation, while others focus on image synthesis or inference tasks. Teutonic, also known as SN3, now focuses on large-scale model training.

The subnet was previously linked to Covenant AI’s Templar subnet. It later shifted under new leadership toward frontier-scale decentralized training.

Unlike earlier subnet experiments, Teutonic is attempting to coordinate training of a full 80B-parameter model across distributed compute providers.

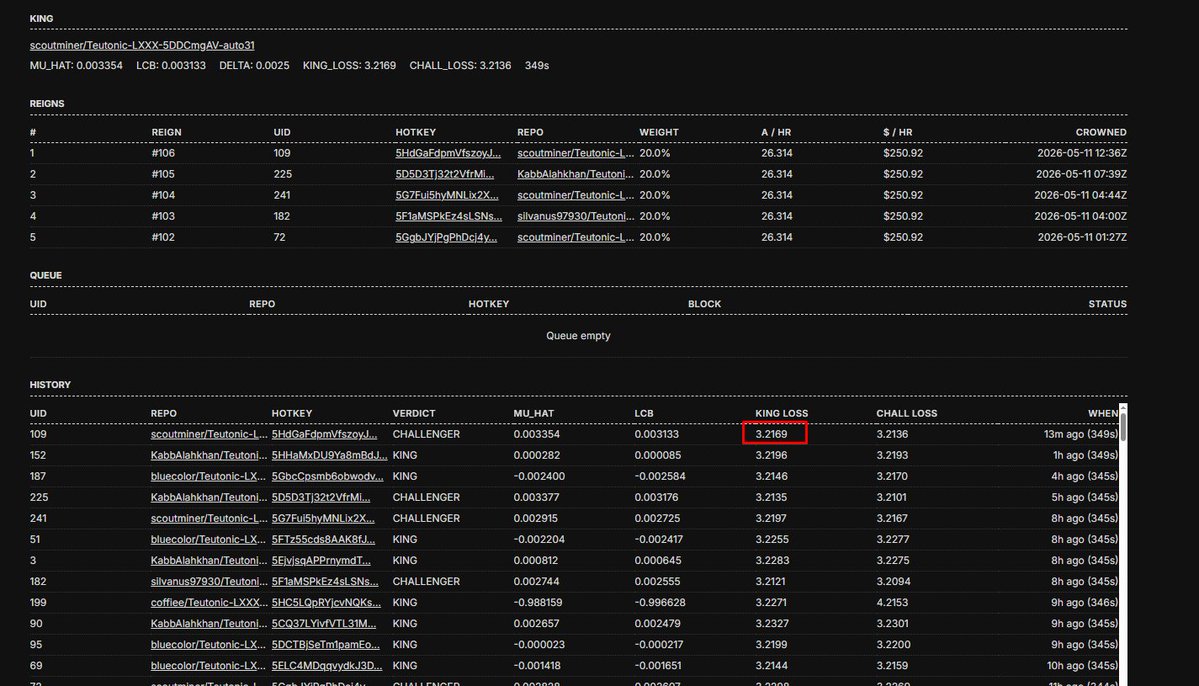

The public dashboard shows active reigns, challengers, model loss metrics, and subnet payouts in real time.

“We construct the loss landscape as a market: Miners compete to win the sequence of updates which take the loss lower,” Bittensor co-founder Jacob Steeves wrote.

The Teutonic system runs through a continuous “king-of-the-hill” competition between miners.

Participants upload candidate model checkpoints linked to their Bittensor hotkeys through Hugging Face repositories. Validators then compare those checkpoints against the current “KING” model.

The scoring system uses perplexity loss evaluations on a custom dataset called Hippius. If a challenger performs better, it immediately replaces the current KING.
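As a rough illustration of the comparison described above, the sketch below turns per-token log-probabilities into a mean loss and a perplexity, then applies a "strictly lower loss wins" rule. It is not Teutonic's validator code: the function names, the `min_improvement` margin, and the assumption that loss is measured in nats per token are all hypothetical.

```python
import math
from typing import Sequence


def mean_loss(token_log_probs: Sequence[float]) -> float:
    """Average negative log-likelihood in nats per token."""
    if not token_log_probs:
        raise ValueError("need at least one token log-probability")
    return -sum(token_log_probs) / len(token_log_probs)


def perplexity(loss: float) -> float:
    """Perplexity is the exponential of the mean loss (natural log assumed)."""
    return math.exp(loss)


def challenger_wins(king_loss: float, challenger_loss: float,
                    min_improvement: float = 0.0) -> bool:
    """Hypothetical replacement rule: the challenger must beat the king's
    loss by more than `min_improvement` (an assumed margin, default zero)."""
    return challenger_loss < king_loss - min_improvement


if __name__ == "__main__":
    king = mean_loss([-2.9, -3.1, -3.0])        # toy values, not real data
    challenger = mean_loss([-2.7, -2.9, -2.8])  # toy values, not real data
    print(f"king perplexity: {perplexity(king):.2f}")
    print(f"challenger perplexity: {perplexity(challenger):.2f}")
    print("challenger takes the crown:", challenger_wins(king, challenger))
```

Under the same nats-per-token assumption, a loss of 3 corresponds to a perplexity of about 20, since perplexity is simply the exponential of the loss.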

The winning model then receives the majority of subnet emissions.
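The king-of-the-hill dynamic and the payout rule can be sketched as a small simulation. Everything here is illustrative: the `Checkpoint` fields, the placeholder hotkeys and repository names, the 90% `king_share`, and the even split of the remainder are assumptions, since the article only says the winner receives "the majority" of emissions.

```python
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass(frozen=True)
class Checkpoint:
    hotkey: str   # miner's Bittensor hotkey (placeholder strings below)
    repo: str     # Hugging Face repository id (hypothetical)
    loss: float   # validator-measured evaluation loss


def run_reigns(king: Checkpoint, challengers: Iterable[Checkpoint]) -> List[Checkpoint]:
    """Return the sequence of kings; a challenger takes over only if its
    loss is strictly lower than the current king's."""
    reigns = [king]
    for challenger in challengers:
        if challenger.loss < reigns[-1].loss:
            reigns.append(challenger)
    return reigns


def split_emissions(king_hotkey: str, other_hotkeys: List[str], total: float,
                    king_share: float = 0.9) -> Dict[str, float]:
    """Assumed payout rule: the king gets `king_share` of `total`, the rest
    is split evenly. `other_hotkeys` must not include the king."""
    if not 0.5 < king_share <= 1.0:
        raise ValueError("king_share must be a majority, in (0.5, 1.0]")
    if not other_hotkeys:
        return {king_hotkey: total}
    payouts = {king_hotkey: total * king_share}
    remainder = total * (1.0 - king_share) / len(other_hotkeys)
    for hotkey in other_hotkeys:
        payouts[hotkey] = remainder
    return payouts


if __name__ == "__main__":
    start = Checkpoint("hotkey-a", "example/king-0", 3.0)
    queue = [
        Checkpoint("hotkey-b", "example/try-1", 3.1),  # worse, rejected
        Checkpoint("hotkey-c", "example/try-2", 2.8),  # better, new king
        Checkpoint("hotkey-b", "example/try-3", 2.9),  # worse than 2.8
    ]
    reigns = run_reigns(start, queue)
    print([c.hotkey for c in reigns])
    print(split_emissions(reigns[-1].hotkey, ["hotkey-a", "hotkey-b"], 100.0))
```

The point of the sketch is that no coordinator picks the winner: the ordering of reigns falls out of the loss comparison alone, and the payout follows whoever currently holds the crown.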

According to Steeves, onboarding has been simplified for participants. Miners can connect through compute keys like LIUM or Targon while the system handles the remaining coordination.

The process operates without a centralized coordinator. Instead, market incentives determine which updates survive.

Training an 80B-parameter AI model requires massive GPU resources and tight coordination. Most frontier AI systems today are trained by centralized companies on private infrastructure.

The Teutonic experiment aims to prove that open economic incentives can coordinate compute at a similar scale.

For Bittensor, this launch represents a major milestone. It pushes the network beyond smaller experiments into workloads closer to those handled by major AI labs.

If successful, the effort could attract more miners, validators, and institutional attention toward decentralized AI infrastructure.

Higher participation may also increase demand for TAO emissions and subnet compute access.

The timing is also important. Bittensor has spent recent months dealing with governance disputes and subnet restructuring debates. Teutonic’s training run now acts as one of the network’s largest public demonstrations of renewed momentum.

The decentralized training process is expected to continue for weeks or possibly months. Miners will continue competing to improve model performance and reduce loss scores.

Successful checkpoints could later become open-source or integrate into other Bittensor subnets for inference services.

The experiment may also influence future governance discussions around subnet emissions, ownership structures, and compute allocation. For now, the training remains fully public and continuously active. Every reign, challenge, and loss update is visible through the dashboard.

This article is for informational purposes only and does not constitute financial advice. Always do your own research before making investment decisions.

Our Crypto Talk is committed to unbiased, transparent, and true reporting to the best of our knowledge. This news article aims to provide accurate information in a timely manner. However, we advise the readers to verify facts independently and consult a professional before making any decisions based on the content since our sources could be wrong too. Check our Terms and conditions for more info.

